Good Enough at Scale Beats Exact at Never

Analyze massive data accurately at low cost and high performance

TL;DR — Modern systems collect more data than we can afford to analyze exactly. Sketches are a family of algorithms that answer questions like “have I seen this key?”, “what are the keys with the top 10 values?”, or “what’s the median and p90 value?” using a fraction of the memory and time of exact approaches, with accuracy guarantees that are good enough for most real-world use cases.

You’re staring at your observability dashboard, trying to answer a simple question: what are the top 10 nodes consuming the most CPU right now? The system is ingesting millions of metrics every minute. The data is there, but getting to it efficiently is the real problem.

This is the kind of question that should take milliseconds. At scale, it can take upto minutes, or even fail entirely. This problem is not specific to observability. The same wall shows up in network monitoring, real-time analytics, anomaly detection — any modern pipeline where data arrives faster than it can be processed exactly.

ProjectASAP is building the next generation of data infrastructure for exactly this problem. This blog is about one of the core ideas behind it: sketches. Sketches are a family of algorithms that answer questions like “have I seen this key?”, “what are the keys with top 10 values?”, or “what’s the p90 value?” using a fraction of the memory and latency of exact approaches, with accuracy guarantees that are good enough to drive production use cases.

The Problem

Many domains, like observability and network monitoring, collect far more data than anyone can store or scan exactly. Analyzing it the naive way is prohibitively slow, expensive, and often impossible at scale.

To understand how sketches work, consider a different kind of data problem.

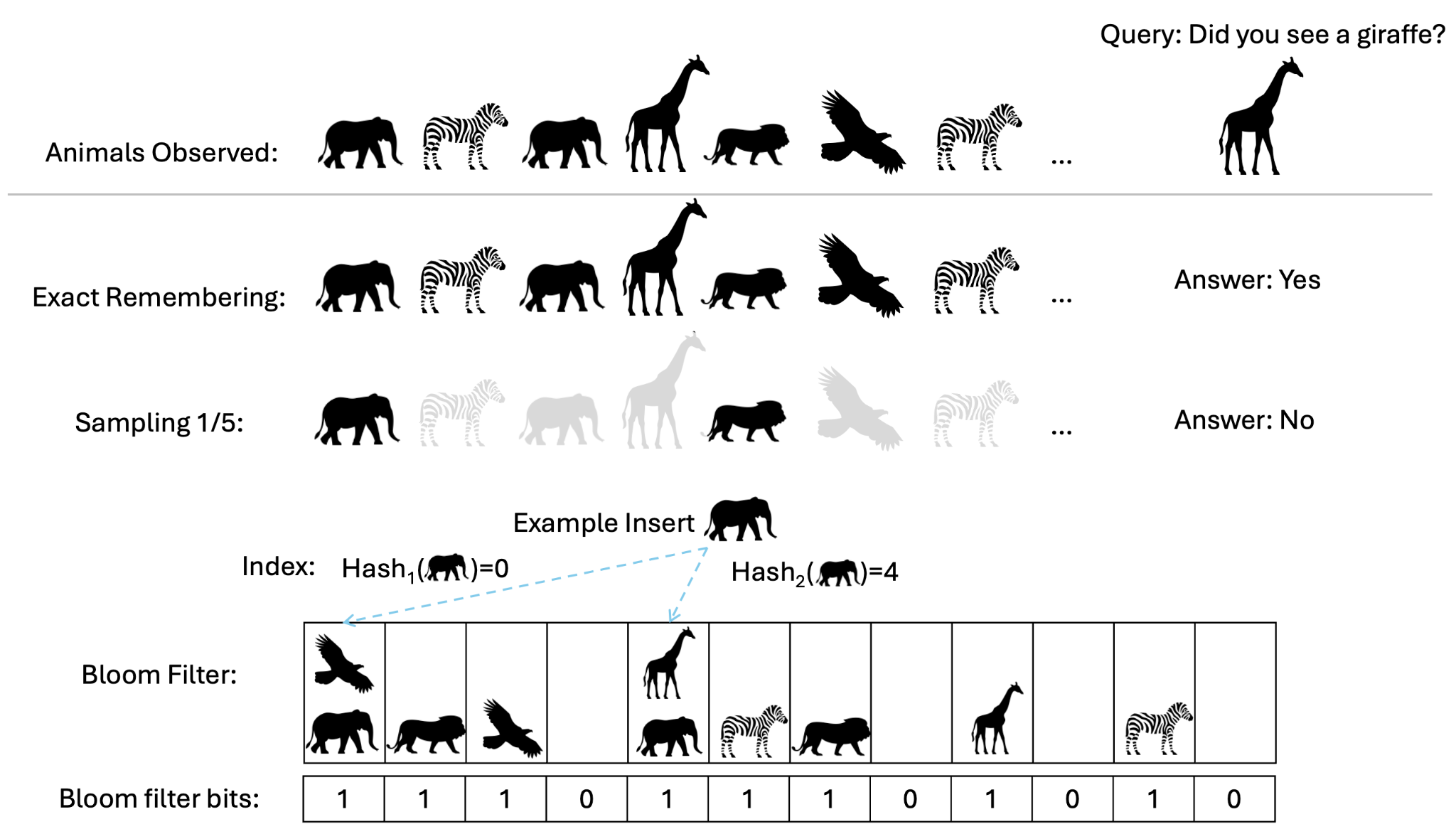

You’re on a wildlife safari in Africa. Over the course of a day you see hundreds of animals — zebras, elephants, lions, and the occasional giraffe. At the end of the day, your friend asks: “Did you see a giraffe?”. A simple question; but after hundreds of animals, you can’t remember every sighting in your head.

Your next best bet is to sample the data. So the next time you go on a safari, you try to rememeber only every fith animal (sampling rate = 1/5), and hope that that’s a good enough sample to answer your friend’s questions. However, you may very well drop the occasional giraffe from your samples. Now you go and tell your friend that you didn’t see a giraffe but actually you had, you just dropped it while sampling. Not a satisfying outcome.

Sketches take a different approach to this problem. Instead of remembering the animals themselves (or a sample thereof), you ingest each animal into a summary, called a sketch. This compact sketch-based summary is a data structure that captures just enough information of the animals you’ve seen so far, to answer your friend’s questions relaibly. At the same time, it uses a tiny fraction of memory compared to remembering all the animals you’ve seen.

The Key Idea

When you don’t need a perfect forensic answer, approximate answers from sketches can cut memory and query latency by orders of magnitude, while still being accurate enough to drive real decisions.

Bloom Filters: Did I See It?

You need a way to record each animal as you see it, and later answer “did I see a giraffe?”, without storing each animal you encountered.

To do this, let’s revisit a classical idea from the 1970s — the Bloom filter1. Bloom filters are a type of sketch built to answer set-membership queries: has a particular item (like a giraffe) been seen or not?

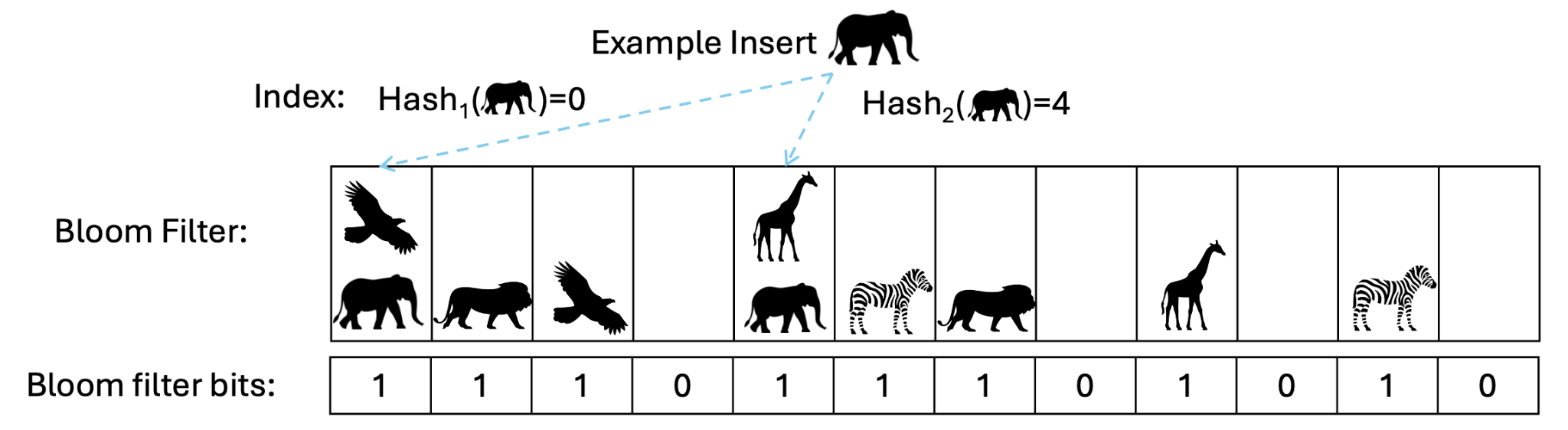

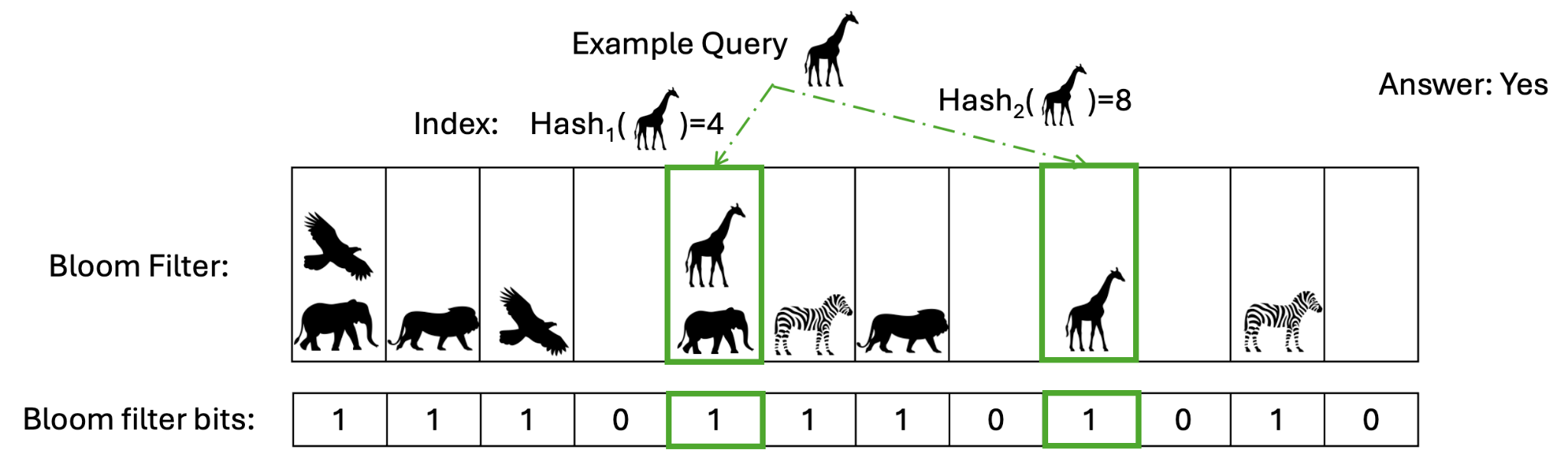

A Bloom filter does this with a fixed-size array of bits, all initialized to zero. When you see a new animal, you run it through k hash functions, each producing an index into the array, and set those bits to 1. Do this for every animal you see. To check later whether you saw a giraffe, run it through the same hash functions and look at the resulting bits. If any bit is 0, the giraffe was definitely not seen. If all bits are 1, it was almost certainly seen.

Bloom filters never produce false negatives. If the filter says no, the answer is no. They can produce false positives i.e. saying yes when the answer is actually no; but the false positive rate is tunable. A larger bit array reduces collisions between different animals. The number of hash functions k has an optimal value for a given array size and item count. As a concrete example, storing 1 million items with a 1% false positive rate requires roughly 1.14 MB and 7 hash functions, compared to tens of megabytes for an exact hash set2.

Back to the observability dashboard: this is exactly how high-throughput systems deduplicate metric keys at ingestion. Rather than checking a full index of every seen metric, prohibitively expensive at millions of events per minute, a Bloom filter gives you a near-instant, memory-efficient answer. The occasional false positive just results in a redundant write, but maintains correctness.

Count-Min Sketch: How Often, and What’s Most Common?

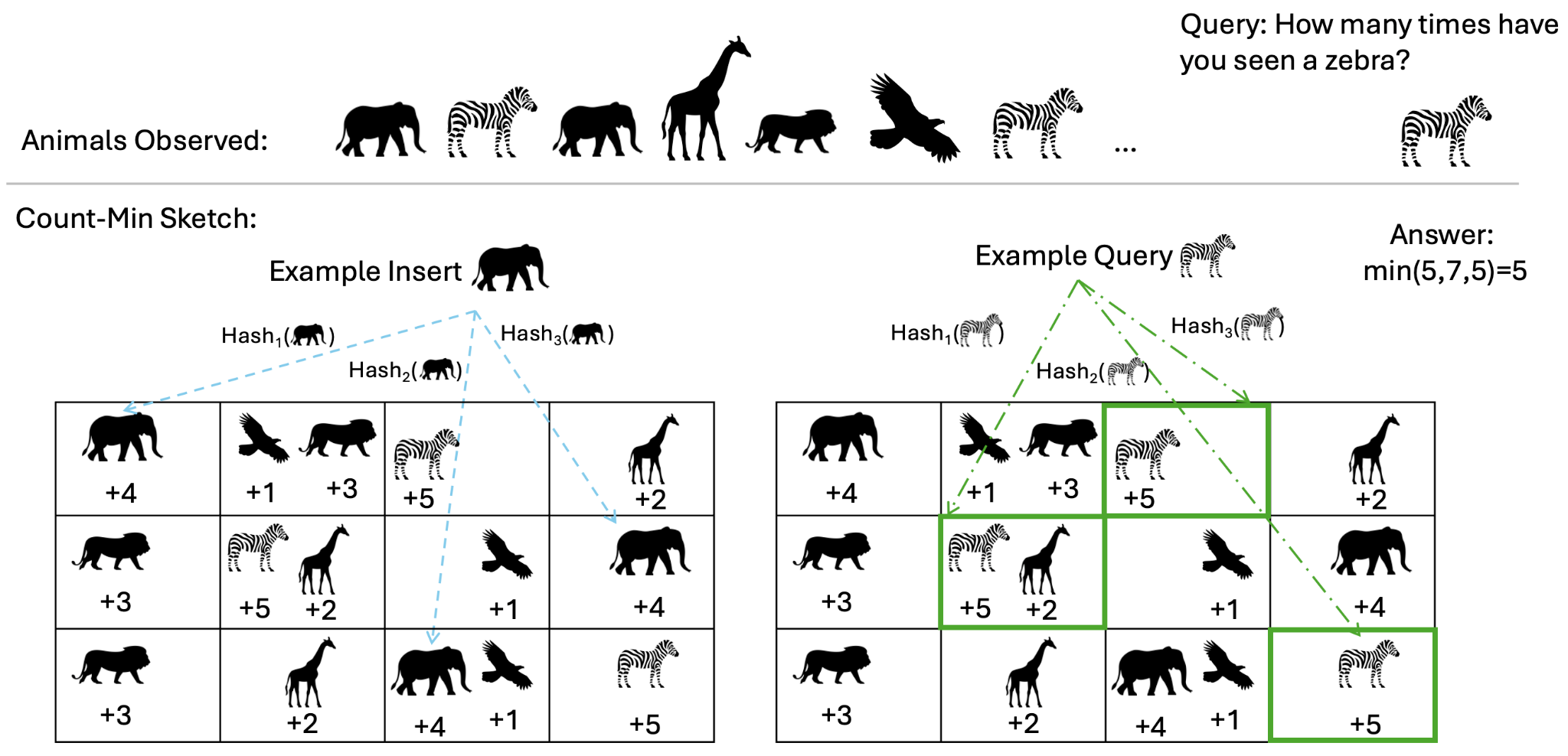

Your friend asks you two more questions about the safari: “How many times did you see a zebra?” and “What were the 3 most common animals you saw?”

The obvious approach is to keep a running count per animal, updating it as you go. We hit the same wall as before: tracking so many unique animals and their frequencies is impractical at scale.

Sampling doesn’t rescue us here either. Rare animals may disappear entirely, and even for common ones, sampling introduces large variance, making it unreliable for finding the most frequent items. Suppose you saw zebras 5 times, elephants 4 times, lions 3 times, giraffes twice, and an eagle once. The true top 3 are zebras, elephants, and lions. But if you sample 1 in every 5 animals, you might end up with 1 zebra, 1 lion, and 1 giraffe — landing on a completely wrong answer.

The Count-Min Sketch3 solves this with a 2D array of integer counters. Each row of the array row is tied to a distinct hash function. When you see an animal, you hash it once per row and increment the corresponding counter. To estimate an animal’s frequency later, you hash it again through the same functions and take the minimum of the counters it lands on. For instance, if the counters read (5, 7, 5), you estimate the frequency as 5.

Hash collisions in the 2D array mean that multiple animals can map to the same array counter, artificially inflating the count of animals. That’s exactly why the sketch uses multiple rows. Collisions are unlikely to happen consistently across all hash functions, so taking the minimum suppresses the impact of overcounting. The two dimensions of the sketch control different aspects of accuracy: more columns reduce the magnitude of overcount error by spreading items more thinly, while more rows reduce the probability that any row’s count is inflated at all.

To track the top-3 most frequent animals, we pair the Count-Min Sketch with a small min-heap. As animal observations stream in, we update the sketch and maintain a heap of the current top-k candidates based on their estimated counts. This gives us a continuously-updated approximate top-k without ever storing the full list of animals.

Back to the observability dashboard: this is how you answer “what are the top 10 nodes by CPU usage?” across millions of metrics per minute, without maintaining an exact sorted index of every node. The Count-Min Sketch tracks estimated frequencies in fixed memory and the heap tracks the nodes with heaviest CPU usage. An occasional misranking at the boundary is a practical tradeoff to make in return for a query that runs with a tiny memory footprint and low latency.

Not Just a One-Trick Pony

Bloom filters and Count-Min are just two examples. There are sketches for cardinality (HyperLogLog4), quantiles (t-digest5, KLL6), set similarity (MinHash7), and more. Whatever summary statistic you need, there’s likely a sketch for it.

Beyond the Bloom Filter and Count-Min Sketch

The Bloom filter and Count-Min Sketch are two specific instances of a broader family of sketches that trade-off a small amount of accuracy for massive gains in efficiency. The same idea we’ve discussed above extends to other kinds of queries and analytics. The HyperLogLog sketch can estimate cardinality i.e. “how many distinct animals did you see today?”, while KLL and t-digest can estimate percentiles such as “what was the median sighting duration in the safari”. Whatever aggregate analytics your pipeline needs, there is almost certainly a sketch for it.

Good-Enough Outperforms Exact at Production Scale

The safari example is useful to understand the intuition of sketches, but the stakes in production are higher than remembering giraffes.

In metrics observability, operators constantly ask questions like “what are the top 10 nodes with the highest CPU usage?” or “what’s the p90 latency for this service over the last 10 minutes?” As deployments scale to millions of nodes and containers, exact answers to these questions become prohibitively expensive — slow to compute, costly to store, and increasingly hard to serve in real time. Sketches reduce these to fixed-memory, constant-time operations without meaningfully sacrificing the accuracy operators actually need to make decisions.

The same holds for tasks like network monitoring and log aggregations. In the former, traffic volumes make per-flow tracking impractical; while in the latter, finding the most frequent error types across billions of log lines is exactly the kind of query that breaks exact approaches and where a Count-Min Sketch with a min-heap is your best bet to maximize efficient while being good enough.

At sufficient scale, exact answers are slow, expensive, and often unnecessary. Approximate answers, with known and bounded error, are fast, cheap, and good enough to drive real decisions. We truly believe that “good enough” isn’t a compromise here, it’s the approach that scales.

The Quantitative Backing

To ground this concretely, we benchmarked three sketch families against their exact baselines — a HashMap for frequency, an exact set for cardinality, and a sorted array for quantiles — on a workload of 10 million data points with 100K distinct keys under a Zipf(1.1) distribution, which reflects the skewed frequency patterns typical of real observability and analytics workloads.

We see that sketches deliver multiple orders-of-magnitude savings in memory while keeping error bounded and small — up to roughly 21,000× smaller for KLL quantiles, 63× for HyperLogLog cardinality, and 2.5× for Count-Min frequency on this workload. The accuracy figures are 100% − relative error against the exact baseline for each sketch’s primary query type: frequency estimation for Count-Min, cardinality for HyperLogLog, and quantile estimation for KLL. In each case the error is bounded and configurable — a larger sketch pushes accuracy closer to 100%, at a predictable memory cost.

| Sketch | Memory lower is better | Accuracy higher is better |

|---|---|---|

| CMS | 2.5× smaller (1.86 MB → 768 KB) | 97.21% |

| HLL | 63× smaller (0.98 MB → 16 KB) | 99.635% |

| KLL | ~21,000× smaller (128 MB → 6.25 KB) | 99.599% |

Insert and query throughput stayed at parity or better than the exact baselines across all three sketches.

What’s Next

We’ll soon release ProjectASAP’s easy-to-use, high-performance library of sketches, along with tutorials on applying it to real workloads — including time-series metrics for observability and network monitoring. Stay tuned!

-

B. H. Bloom, “Space/time trade-offs in hash coding with allowable errors,” Communications of the ACM, vol. 13, no. 7, pp. 422–426, 1970. https://doi.org/10.1145/362686.362692 ↩

-

Bloom Filter Calculator — https://hur.st/bloomfilter/ ↩

-

G. Cormode and S. Muthukrishnan, “An improved data stream summary: the Count-Min Sketch and its applications,” Journal of Algorithms, vol. 55, no. 1, pp. 58–75, 2005. https://doi.org/10.1016/j.jalgor.2003.12.001 ↩

-

P. Flajolet, É. Fusy, O. Gandouet, and F. Meunier, “HyperLogLog: the analysis of a near-optimal cardinality estimation algorithm,” in Proc. Conf. Analysis of Algorithms (AofA), DMTCS Proceedings, vol. AH, pp. 137–156, 2007. https://doi.org/10.46298/dmtcs.3545 ↩

-

T. Dunning, “The t-digest: Efficient estimates of distributions,” Software Impacts, vol. 7, p. 100049, 2021. https://doi.org/10.1016/j.simpa.2020.100049 ↩

-

Z. Karnin, K. Lang, and E. Liberty, “Optimal Quantile Approximation in Streams,” in Proc. IEEE FOCS, pp. 71–78, 2016. https://doi.org/10.1109/FOCS.2016.17 ↩

-

A. Z. Broder, “On the resemblance and containment of documents,” in Proc. Compression and Complexity of Sequences (SEQUENCES 1997), pp. 21–29, 1998. https://doi.org/10.1109/SEQUEN.1997.666900 ↩